Archive for the ‘Product Development’ Category

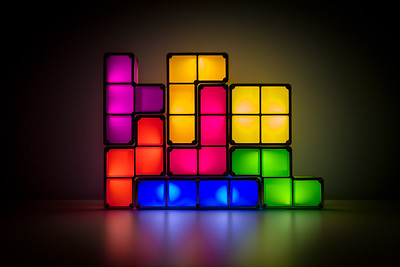

Playing Tetris With Your Project Portfolio

When planning the projects for next year, how do you decide which projects are a go and which are a no? One straightforward way is to say yes to projects when there are resources lined up to get them done and no to all others. Sure, the projects must have a good return on investment but we’re pretty good at that part. But we’re not good at saying no to projects based on real resource constraints – our people and our budgets.

It’s likely your big projects are well-defined and well-staffed. The usual problem with these projects is that the timeline is disrespectful of the work content and overly optimistic. If the project timeline is shorter than that of a previously completed project of a similar flavor, with a similar level of novelty and similar resource loading, the timeline is overly optimistic and the project will be late.

Project delays in the big projects block shared resources from moving onto other projects within the appropriate time window which cascades delays into those other projects. And the project resources themselves must stay on the big projects longer than planned (we knew this would happen even before the project started) which blocks the next project from starting on time and generates a second set of delays that rumble through the project portfolio. But the big projects aren’t the worst delay-generating culprits.

The corporate initiatives and infrastructure projects are usually well-staffed with centralized resources, but these projects also require significant work from the business units, and that work is an incremental demand on them. And the only place the business units can get the resources is to pull them off the (too many) big projects they’ve already committed to. And remember, the timelines for those projects are overly optimistic. The big projects that were already late before the corporate initiatives and infrastructure projects are slathered on top of them are now later.

Then there are small projects that don’t look like they’ll take long to complete, but they do. And though the project plan does not call for support resources (hey, this is a small project you know), support resources are needed. These small projects drain resources from the big projects and the support resources they need. Delay on delay on delay.

Coming out of the planning process, all teams are over-booked with too many projects, too few resources, and timelines that are too short. And then the real fun begins.

Over the course of the year, new projects arise and are started even though there are already too few resources to deliver on the existing projects. Here’s a rule no one follows: If the teams are fully-loaded, new projects cannot start before old ones finish.

It makes less than no sense to start projects when resources are already triple-double booked on existing projects. This behavior has all the downside of starting a project (consumption of resources) with none of the upside (progress). And there’s another significant downside that most don’t see. The inappropriate “starting” of the new project allows the company to tell itself that progress is being made when it isn’t. All that happens is existing projects are further starved for resources and the slow pace of progress is slowed further.

It’s bad form to play Tetris with your project portfolio.

Running too many projects in parallel is not faster. In fact, it’s far slower than matching the projects to the resources on-hand to do them. It’s essential to keep in mind that there is no partial credit for starting a project. There is 100% credit for finishing a project and 0% credit for starting and running a project.
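To put rough numbers on that claim, here is a minimal sketch. It assumes one team, a fixed amount of work per project, and no cost for switching between projects. Those are my simplifying assumptions, and the function names are made up for illustration. Real multitasking carries a switching penalty, so this is the kindest possible case for running in parallel.

```python
def finish_times_serial(work_months):
    """Finish times when the whole team works one project at a time, in order."""
    finish, elapsed = [], 0.0
    for work in work_months:
        elapsed += work
        finish.append(elapsed)
    return finish


def finish_times_parallel(work_months):
    """Finish times when the team's capacity is split evenly across all unfinished projects."""
    remaining = list(work_months)
    finish = [0.0] * len(remaining)
    active = [i for i, work in enumerate(remaining) if work > 0]
    elapsed = 0.0
    while active:
        n = len(active)
        smallest = min(remaining[i] for i in active)
        # Each active project gets 1/n of the team, so burning down
        # `smallest` months of work on every project takes smallest * n months.
        elapsed += smallest * n
        for i in active:
            remaining[i] -= smallest
            if remaining[i] <= 0:
                finish[i] = elapsed
        active = [i for i in active if remaining[i] > 0]
    return finish


projects = [4, 4, 4]  # months of full-team work per project (illustrative)
print("serial:  ", finish_times_serial(projects))
print("parallel:", finish_times_parallel(projects))
```

Under these assumptions, three four-month projects run one at a time finish at months 4, 8 and 12. Run all at once, none of them finishes before month 12. No project finishes sooner in parallel, and two of them finish much later.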

With projects, there are two simple rules. 1) Limit the number of projects by the available resources. 2) Finish a project before starting another.

Image credit – gerlos

The Best Way To Make Projects Go Faster

When there are too many projects, all the projects move too slowly.

When there are too many projects, adding resources doesn’t help much and may make things worse.

To speed up the important projects, stop the less important projects. There’s no better way.

When there are too many projects, stopping comes before starting.

All projects are important, it’s just that some are more important than others. Stop the lesser ones.

When someone says all projects are equally important, they don’t understand projects.

If all projects are equally important, then they are also equally unimportant and it does not matter which projects are stopped. This twist of thinking can help people choose the right projects to stop.

When there are too many projects, stop two before starting another.

Finishing a project is the best way to stop a project, but that takes too long. Stop projects in their tracks.

There is no partial credit for a project that is 80% complete and blocking other projects. It’s okay to stop the project so others can finish.

Queueing theory says wait times increase dramatically when utilization of shared resources reaches 85%. The math says projects should be stopped well before shared resources are fully booked.
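For a feel of how sharply waits climb, here is a small sketch using the textbook single-server M/M/1 queue, where the average wait in the queue is the average service time multiplied by utilization / (1 - utilization). The choice of M/M/1 is my assumption. Real shared resources are messier, so treat the numbers as the shape of the curve and not a forecast.

```python
def wait_multiplier(utilization):
    """Mean time waiting in the queue, as a multiple of the mean service time (M/M/1)."""
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be at least 0 and less than 1")
    return utilization / (1 - utilization)


for u in (0.50, 0.70, 0.85, 0.95):
    print(f"{u:.0%} utilized: wait is about {wait_multiplier(u):.1f}x the service time")
```

In this model a resource that is half-booked makes work wait about as long as the work itself takes, and the multiplier grows without bound as utilization approaches 100%.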

If you want to go faster, stop the lesser projects.

Image credit – Rodrigo Olivera

The Curse of Too Many Active Projects

If you want your new product development projects to go faster, reduce the number of active projects. Full stop.

A rule to live by: If the new product development project is 90% complete, the company gets 0% of the value. When it comes to new product development projects, there’s no partial credit.

Improving the capabilities of your project managers can help you go faster, but not if you have too many active projects.

If you want to improve the speed of decision-making around the projects, reduce the number of required decisions by reducing the number of active projects.

Resource conflicts increase radically as the number of active projects increases. To fix this, you guessed it, reduce the number of active projects.

A project that is run under the radar is the worst type of active project. It sucks resources from the official projects and prevents truth telling because no one can admit the dark project exists.

With fewer active projects, resource intensity increases, the work is done faster, and the projects launch sooner.

Shared resources serve the projects better and faster when there are fewer active projects.

If you want to go faster, there’s no question about what you should do. You should stop the lesser projects to accelerate the most important ones. Full stop.

And if you want to stop some projects, I suggest you try to answer this question: Why does your company think it’s a good idea to have far too many active new product development projects?

Image credit — JOHN K THORNE

The Difficulty of Starting New Projects

Companies that are good at planning their projects create roadmaps spanning about three years, where individual projects are sequenced to create a coordinated set of projects that fit with each other. The roadmap helps everyone know what’s important and helps the resources flow to those most important projects.

Through the planning process, the collection of potential projects is assessed and the best ones are elevated to the product roadmap. And by best, I mean the projects that will generate the most incremental profit. The projects on the roadmap generate the profits that underpin the company’s financial plan and the company is fanatically committed to the financial plan. The importance of these projects cannot be overstated. And what that means is once a project makes it to the roadmap, there’s only one way to get it off the roadmap, and that’s to complete it successfully.

For the next three years, everyone knows what they’ll work on. And they also know what they won’t work on.

The best companies want to be efficient so they staff their projects in a way that results in high utilization. The most common way to do this is to load up the roadmap with too many projects and staff the projects with too few people. The result is a significant fraction of people’s time (sometimes more than 100%) is pre-allocated to the projects on the roadmap. The efficiency metrics look good and it may actually result in many successful launches. But the downside of ultra-high utilization of resources is often forgotten.

When all your people are booked for the next three years on high-value projects, they cannot respond to new opportunities as they arise. When someone comes back from a customer visit and says, “There’s an exciting new opportunity to grow the business significantly!” the best response is “We can’t do that because all our people are committed to the three-year plan.” The worst response is “Let’s put together a team to create a project plan and do the project.” With the first response, the project doesn’t get done and zero resources are wasted trying to figure out how to do the project without the needed resources. With the second response, the project doesn’t get done but only after significant resources are wasted trying to figure out how to do the project without the needed resources.

Starting new projects is difficult because everyone is over-booked and over-committed on projects that the company thinks will generate significant (and predictable) profits. What this means is to start a new project in this high-utilization environment, the new project must displace a project on the three-year plan. And remember, the projects that must be displaced are the projects the company has chosen to generate the company’s future profits. So, to become an active project (and make it to the three-year plan) the candidate project must be shown to create more profits, use fewer resources and launch sooner than the projects already on the three-year plan. And this is taller than a tall order.

So, is there a solution? Not really, because the only possible solution is to reduce resource utilization to create unallocated resources that can respond to emergent opportunities when they arise. And that’s not possible because good companies have a deep and unskillful attachment to efficiency.

Image credit — Bernard Spragg NZ

Reducing Time To Market vs. Improving Profits

X: We need to decrease the time to market for our new products.

Me: So, you want to decrease the time it takes to go from an idea to a commercialized product?

X: Yes.

Me: Okay. That’s pretty easy. Here’s my idea. Put some new stickers on the old product and relaunch it. If we change the stickers every month, we can relaunch the product every month. That will reduce the time to market to one month. The metrics will go through the roof and you’ll get promoted.

X: That won’t work. The customers will see right through that and we won’t sell more products and we won’t make more money.

Me: You never said anything about making more money. You said you wanted to reduce the time to market.

X: We want to make more money by reducing time to market.

Me: Hmm. So, you think reducing time to market is the best way to make more money?

X: Yes. Everyone knows that.

Me: Everyone? That’s a lot of people.

X: Are you going to help us make more money by reducing time to market?

Me: I won’t help you with both. If you had to choose between making more money and reducing time to market, which would you choose?

X: Making money, of course.

Me: Well, then why did you start this whole thing by asking me for help improving time to market?

X: I thought it was the best way to make more money.

Me: Can we agree that if we focus on making more money, we have a good chance of making more money?

X: Yes.

Me: Okay. Good. Do you agree we make more money when more customers buy more products from us?

X: Everyone knows that.

Me: Maybe not everyone, but let’s not split hairs because we’re on a roll here. Do you agree we make more money when customers pay more for our products?

X: Of course.

Me: There you have it. All we have to do is get more customers to buy more products and pay a higher price.

X: And you think that will work better than reducing time to market?

Me: Yes.

X: And you know how to do it?

Me: Sure do. We create new products that solve our customers’ most important problems.

X: That’s totally different than reducing time to market.

Me: Thankfully, yes. And far more profitable.

X: Will that also reduce the time to market?

Me: I thought you said you’d choose to make more money over reducing time to market. Why do you ask?

X: Well, my bonus is contingent on reducing time to market.

Me: Listen, if the previous new product development projects took two years, and you reduce the time to market to one and a half years, there’s no way for you to decrease time to market by the end of the year to meet your year-end metrics and get your bonus.

X: So, the metrics for my bonus are wrong?

Me: Right.

X: What should I do?

Me: Let’s work together to launch products that solve important customer problems.

X: And what about my bonus?

Me: Let’s not worry about the bonus. Let’s worry about solving important customer problems, and the bonuses will take care of themselves.

Image credit — Quinn Dombrowski

X: Me: format stolen from @swardley. Thank you, Simon.

Which new product development project should we do first?

X: Of the pool of candidate new product development projects, which project should we do first?

Me: Let’s do the one that makes us the most money.

X: Which project will make the most money?

Me: The one where the most customers buy the new product, pay a reasonable price, and feel good doing it.

X: And which one is that?

Me: The one that solves the most significant problem.

X: Oh, I know our company’s most significant problem. Let’s solve that one.

Me: No. Customers don’t care about our problems, they only care about their problems.

X: So, you’re saying we should solve the customers’ problem?

Me: Yes.

X: Are you sure?

Me: Yes.

X: We haven’t done that in the past. Why should we do it now?

Me: Have your previous projects generated revenue that met your expectations?

X: No, they’ve delivered less than we hoped.

Me: Well, that’s because there’s no place for hope in this game.

X: What do you mean?

Me: You can’t hope they’ll buy it. You need to know the customers’ problems and solve them.

X: Are you always like this?

Me: Only when it comes to customers and their problems.

image credit: Kyle Pearce

Three Important Choices for New Product Development Projects

Choose the right project. When you say yes to a new project, all the focus is on the incremental revenue the project will generate and none of the focus is on unrealized incremental revenue from the projects you said no to. Next time there’s a proposal to start a new project, ask the team to describe the two or three most compelling projects that they are asking the company to say no to. Grounding the go/no-go decision within the context of the most compelling projects will help you avoid the real backbreaker where you consume all your product development resources on something that scratches the wrong itch while you prevent those resources from creating something magical.

Choose what to improve. Give your customers more of what you gave them last time unless what you gave them last time is good enough. Once goodness is good enough, giving customers more is bad business because your costs increase but their willingness to pay does not. Once your offering meets the customers’ needs in one area, lock it down and improve a different area.

Choose how to staff the projects. There is a strong temptation to run many projects in parallel. It’s almost like our objective is to maximize the number of active projects at the expense of completing them. Here’s the thing about projects – there is no partial credit for partially completed projects. Eight active projects that are eight (or eighty) percent complete generate zero revenue and have zero commercial value. For your most important project, staff it fully. Add resources until adding more resources would slow the project. Then, for your next most important project, repeat the process with your remaining resources. And once a project is completed, add those resources to the pool and start another project. This approach is especially powerful because it prioritizes finishing projects over starting them.
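The staffing approach above can be sketched as a simple priority-ordered allocation. This is only an illustration. The function name and the `max_useful_staff` number (the point where adding more people would slow the project) are hypothetical inputs you would have to estimate for your own projects.

```python
def staff_by_priority(projects, people):
    """Staff projects in priority order, most important first.

    projects: list of (name, max_useful_staff) tuples, already sorted by importance.
    people:   number of people available right now.
    Returns (plan, waiting): staffed projects with headcount, and projects that must wait.
    """
    plan, waiting = {}, []
    for name, max_useful_staff in projects:
        # Add people until more would slow the project, or until the pool is empty.
        assigned = min(max_useful_staff, people)
        if assigned > 0:
            plan[name] = assigned
            people -= assigned
        else:
            waiting.append(name)
    return plan, waiting


# Illustrative numbers only.
candidates = [("A", 6), ("B", 5), ("C", 4), ("D", 3)]
plan, waiting = staff_by_priority(candidates, people=10)
print(plan)     # projects that start now
print(waiting)  # projects that wait for a finish
```

With ten people, project A gets its full six, project B gets the remaining four, and C and D do not start. When A finishes, its six people return to the pool and the function is run again on the projects still waiting.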

“Three Cows” by Sunfox is licensed under CC BY-SA 2.0.

The Most Important People in Your Company

When the fate of your company rests on a single project, who are the three people you’d tap to drag that pivotal project over the finish line? And to sharpen it further, ask yourself “Who do I want to lead the project that will save the company?” You now have a list of the three most important people in your company. Or, if you answered the second question, you now have the name of the most important person in your company.

The most important person in your company is the person that drags the most important projects over the finish line. Full stop.

When the project is on the line, the CEO doesn’t matter; the General Manager doesn’t matter; the Business Leader doesn’t matter. The person that matters most is the Project Manager. And the second and third most important people are the two people that the Project Manager relies on.

Don’t believe that? Well, take a bite of this. If the project fails, the product doesn’t sell. And if the product doesn’t sell, the revenue doesn’t come. And if the revenue doesn’t come, it’s game over. Regardless of how hard the CEO pulls, the product doesn’t launch, the revenue doesn’t come, and the company dies. Regardless of how angry the GM gets, without a product launch, there’s no revenue, and it’s lights out. And regardless of the Business Leader’s cajoling, the project doesn’t cross the finish line unless the Project Manager makes it happen.

The CEO can’t launch the product. The GM can’t launch the product. The Business Leader can’t launch the product. Stop for a minute and let that sink in. Now, go back to those three sentences and read them out loud. No, really, read them out loud. I’ll wait.

When the wheels fall off a project, the CEO can’t put them back on. Only a special Project Manager can do that.

There are tools for project management, there are degrees in project management, and there are certifications for project management. But all that is meaningless because project management is alchemy.

Degrees don’t matter. What matters is that you’ve taken over a poorly run project, turned it on its head, and dragged it across the line. What matters is you’ve run a project that was poorly defined, poorly staffed, and poorly funded and brought it home kicking and screaming. What matters is you’ve landed a project successfully when two of three engines were on fire. (Belly landings count.) What matters is that you vehemently dismiss the continuous improvement community on the grounds there can be no best practice for a project that creates something that’s new to the world. What matters is that you can feel the critical path in your chest. What matters is that you’ve sprinted toward the scariest projects and people followed you. And what matters most is they’ll follow you again.

Project Managers have won the hearts and minds of the project team.

The Project Manager knows what the team needs and provides it before the team needs it. And when an unplanned need arises, like it always does, the Project Manager begs, borrows, and steals to secure what the team needs. And when they can’t get what’s needed, they apologize to the team, re-plan the project, reset the completion date, and deliver the bad news to those that don’t want to hear it.

If the General Manager says the project will be done in three months and the Project Manager thinks otherwise, put your money on the Project Manager.

Project Managers aren’t at the top of the org chart, but we punch above our weight. We’ve earned the trust and respect of most everyone. We aren’t liked by everyone, but we’re trusted by all. And we’re not always understood, but everyone knows our intentions are good. And when we ask for help, people drop what they’re doing and pitch in. In fact, they line up to help. They line up because we’ve gone out of our way to help them over the last decade. And they line up to help because we’ve put it on the table.

Whether it’s IoT, Digital Strategy, Industry 4.0, top-line growth, recurring revenue, new business models, or happier customers, it’s all about the projects. None of this is possible without projects. And the keystone of successful projects? You guessed it. Project Managers.

Image credit – Bernard Spragg .NZ

Innovation isn’t uncertain, it’s unknowable.

Where’s the Marketing Brief? In product development, the Marketing team creates a document that defines who will buy the new product (the customer), what needs are satisfied by the new product and how the customer will use the new product. And the Marketing team also uses their crystal ball to estimate the number of units the customers will buy, when they’ll buy it and how much they’ll pay. In theory, the Marketing Brief is finalized before the engineers start their work.

With innovation, there can be no Marketing Brief because there are no customers, no product and no technology to underpin it. And the needs the innovation will satisfy are unknowable because customers have not asked for them, nor could customers understand the innovation if you showed it to them. And how the customers will use the innovation? That’s unknowable because, again, there are no customers and no customer needs. And how many will you sell and the sales price? Again, unknowable.

Where’s the Specification? In product development, the Marketing Brief is translated into a Specification that defines what the product must do and how much it will cost. To define what the product must do, the Specification defines a set of test protocols and their measurable results. And the minimum performance is defined as a percentage improvement over the test results of the existing product.

With innovation, there can be no Specification because there are no customers, no product, no technology and no business model. In that way, there can be no known test protocols and the minimum performance criteria are unknowable.

Where’s the Schedule? In product development, the tasks are defined, their sequence is defined and their completion dates are defined. Because the work has been done before, the schedule is a lot like the last one. Everyone knows the drill because they’ve done it before.

With innovation, there can be no schedule. The first task can be defined, but the second cannot because the second depends on the outcome of the first. If the first experiment is successful, the second step builds on the first. But if the first experiment is unsuccessful, the second must start from scratch. And if the customer likes the first prototype, the next step is clear. But if they don’t, it’s back to the drawing board. And the experiments feed the customer learning and the customer learning shapes the experiments.

Innovation is different than product development. And success in product development may work against you in innovation. If you’re doing innovation and you find yourself trying to lock things down, you may be misapplying your product development expertise. If you’re doing innovation and you find yourself trying to write a specification, you may be misapplying your product development expertise. And if you are doing innovation and find yourself trying to nail down a completion date, you are definitely misapplying your product development expertise.

With innovation, people say the work is uncertain, but to me that’s not the right word. To me, the work is unknowable. The customer is unknowable because the work hasn’t been done before. The specification is unknowable because there is nothing for comparison. And the schedule is unknowable because, again, the work hasn’t been done before.

To set expectations appropriately, say the innovation work is unknowable. You’ll likely get into a heated discussion with those who demand a Marketing Brief, Specification and Schedule, but you’ll make the point that with innovation, the rules of product development don’t apply.

Image credit — Fatih Tuluk

The Additive Manufacturing Maturity Model

Additive Manufacturing (AM) is a technology/product space with ever-increasing performance and an ever-increasing collection of products. There are many different physical principles used to add material, and parts can be made in sizes ranging from micrometers to tens of meters. And there is an ever-increasing collection of materials that can be deposited, from water-soluble plastics to exotic metals to specialty ceramics.

But AM tools and technologies don’t deliver value on their own. In order to deliver value, companies must deploy AM to solve problems and implement solutions. But where to start? What to do next? And how do you know when you’ve arrived?

To help with your AM journey, below is a maturity model for AM. There are eight categories, each with descriptions of increasing levels of maturity. To start, baseline your company in the eight categories and then, once positioned, look to the higher levels of maturity for suggestions on how to move forward.

For a more refined calibration, a formal on-site assessment is available as well as a facilitated process to create and deploy an AM build-out plan. For information on on-site assessment and AM deployment, send me a note at mike@shipulski.com.

Execution

- Specify AM machine – There are many types of AM machines. Learn to choose the right machine.

- Justify AM machine – Define the problem to be solved and the benefit of solving it.

- Budget for AM machine – Find a budget and create a line item.

- Pay for machine – Choose the supplier and payment method – buy it, rent to own, credit card.

- Install machine – Choose location, provide necessary inputs and connectivity.

- Create shapes/add material – Choose the right CAD system for the job, make the parts.

- Create support/service systems – Administer the job queue, change the consumables, maintenance.

- Security – Create a system for CAD files and part files to move securely throughout the organization.

- Standardize – Once the first machines are installed, converge on a small set of standard machines.

- Teach/Train – Create training material for running AM machine and creating shapes.

Solution

- Copy/Replace – Download a shape from the web and make a copy or replace a broken part.

- Adapt/Improve – Add a new feature or function, change color, improve performance.

- Create/Learn – Create something new, show your team, show your customers.

- Sell Products/Services – Sell high volume AM-produced products for a profit. (Stretch goal.)

Volume

- Make one part – Make one part and be done with it.

- Make five parts – Make a small number of parts and learn support material is a challenge.

- Make fifty parts – Make more than a handful of parts. Filament runs out, machines clog and jam.

- Make parts with a complete manufacturing system – This topic deserves a post all its own.

Complexity

- Make a single piece – Make one part.

- Make a multi-part assembly – Make multiple parts and fasten them together.

- Make a building block assembly – Make blocks that join to form an assembly larger than the build area.

- Consolidate – Redesign an assembly to consolidate multiple parts into fewer.

- Simplify – Redesign the consolidated assembly to eliminate features and simplify it.

Material

- Plastic – Low temperature plastic, multicolor plastics, high performance plastics.

- Metal – Low melting temperature with low conductivity, higher melting temps, higher conductivity.

- Ceramics – Common materials with standard binders, crazy materials with crazy binders.

- Hybrid – Multiple types of plastics in a single part, multiple metals in one part, custom metal alloy.

- Incompatible materials – Think oil and water.

Scale

- 50 mm – Not too large and not too small. Fits the build area of a medium-sized machine.

- 500 mm – Larger than the build area of a medium-sized machine.

- 5 m – Requires a large machine or joining multiple parts in a building block way.

- 0.5 mm – Tiny parts, tiny machines, superior motion control and material control.

Organizational Breadth

- Individuals – Early adopters operate in isolation.

- Teams – Teams of early adopters gang together and spread the word.

- Functions – Functional groups band together to advance their trade.

- Supply Chain – Suppliers and customers work together to solve joint problems.

- Business Units – Whole business units spread AM throughout the body of their work.

- Company – Whole company adopts AM and deploys it broadly.

Strategic Importance

- Novelty – Early adopters think it’s cool and learn what AM can do.

- Point Solution – AM solves an important problem.

- Speed – AM speeds up the work.

- Profitability – AM improves profitability.

- Initiative – AM becomes an initiative and benefits are broadly multiplied.

- Competitive Advantage – AM generates growth and delivers on Vital Business Objectives (VBOs).

Image credit – Cheryl

Quantification of Novel, Useful and Successful

Argument is unskillful but analysis is skillful. And what’s needed for analysis is a framework and some good old-fashioned quantification. To create the supporting conditions for an analysis around novelty, usefulness, and successfulness, I’ve created quantifiable indices and a process to measure them. The process starts with a prototype of a new product, service or business model which is shown to potential customers (real people who do work in the space of interest.)

The Novelty Index. The Novelty Index measures the difference of a product, service or business model from the state-of-the-art. Travel to the potential customer and hand them the prototype. With mouth closed and eyes open, watch them use the product or interact with the service. Measure the time it takes them to recognize the novelty of the prototype and assign a value from 0 to 5. (Higher is better.)

5 – Novelty is recognized immediately after a single use (within 5 seconds.)

4 – Novelty is recognized after several uses (30 seconds.)

3 – Novelty is recognized once a pattern emerges (10-30 minutes.)

2 – Novelty is recognized the next day, once the customer has had time to sleep on it (24 hours.)

1 – A formalized A-B test with statistical analysis is needed (1 week.)

0 – The customer says there’s no difference. Stop the project and try something else.

The Usefulness Index. The Usefulness Index measures the level of importance of the novelty. Once the customer recognizes the novelty, take the prototype away from them and evaluate their level of anger.

5 – The customer is irate and seething. They rip it from your arms and demand to place an order for 50 units.

4 – The customer is deeply angry and screams at you to give it back. Then they tell you they want to buy the prototype.

3 – With a smile of happiness, the customer asks to try the prototype again.

2 – The customer asks a polite question about the prototype to make you feel a bit better about their lack of interest.

1 – The customer is indifferent and says it’s time to get some lunch.

0 – Before you ask, the customer hands it back to you and is happy not to have it. Stop the project and try something else.

The Successfulness Index. The Successfulness Index measures the incremental profitability the novel product, service or business model will deliver to your company’s bottom line. After taking the prototype from the customer and measuring the Usefulness Index, with your prototype in hand, ask the customer how much they’d pay for the prototype in its current state.

5 – They’d pay 10 times your estimated cost.

4 – They’d pay two times your estimated cost.

3 – They’d pay 30% more than your estimated cost.

2 – They’d pay 10% more than your estimated cost.

1 – They’d pay you 5% more than your estimated cost.

0 – They don’t answer because they would never buy it.
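As a rough sketch, the Successfulness levels above can be turned into a lookup on the ratio of the customer's stated price to your estimated cost. The function name `successfulness_index` and the `THRESHOLDS` table are hypothetical. Two assumptions go beyond the levels as written: a ratio that falls between two levels gets the lower of the two scores, and any price below 5% over cost scores 0, the same as no answer.

```python
# Minimum price-to-cost ratio for each score, taken from the levels above.
THRESHOLDS = [(10.0, 5), (2.0, 4), (1.30, 3), (1.10, 2), (1.05, 1)]


def successfulness_index(price, estimated_cost):
    """Score 0-5 from the price a customer says they would pay.

    Pass price=None when the customer gives no answer.
    """
    if estimated_cost <= 0:
        raise ValueError("estimated_cost must be positive")
    if price is None:
        return 0  # no answer: they would never buy it
    ratio = price / estimated_cost
    # Assumption: award the highest level whose threshold is met.
    for minimum_ratio, score in THRESHOLDS:
        if ratio >= minimum_ratio:
            return score
    return 0  # assumption: less than 5% over cost is treated as a 0
```

For example, with an estimated cost of 100, a stated price of 150 clears the 30% level but not the two-times level, so it scores a 3.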

The Commercialization Index. The Commercialization Index describes the overall significance of the novel product, service or business model and it’s calculated by multiplying the three indices. The maximum value is 125 (5 x 5 x 5) and the minimum value is 0. Again, higher is better.
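The multiplication is simple enough to do in your head, but a minimal sketch makes one property visible: a zero on any single index zeroes the whole score. The function name `commercialization_index` is hypothetical, and the 0-5 range check assumes the default scale described here.

```python
def commercialization_index(novelty, usefulness, successfulness):
    """Multiply the three 0-5 indices into a single 0-125 score."""
    scores = {
        "novelty": novelty,
        "usefulness": usefulness,
        "successfulness": successfulness,
    }
    for name, score in scores.items():
        # Assumes the default 0-5 scale; adjust if you change the ranges.
        if not 0 <= score <= 5:
            raise ValueError(f"{name} must be between 0 and 5, got {score}")
    return novelty * usefulness * successfulness


print(commercialization_index(5, 5, 5))  # 125
print(commercialization_index(4, 3, 2))  # 24
print(commercialization_index(5, 0, 5))  # 0
```

Because the indices are multiplied rather than added, a prototype that is highly novel and well priced but not useful still scores 0, which matches the "stop the project" guidance at the bottom of each scale.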

The descriptions of the various levels are only examples, and you can change them any way you want. And you can change the value ranges as you see fit. (0-5 is just one way to do it.) And you can replace actual prototypes with sketches, storyboards or other surrogates.

Modify it as you wish, and make it your own. I hope you find it helpful.

Image credit – Nisarg Lakhmani

Mike Shipulski
