Posts Tagged ‘Product Development Engine’

How flexible are your processes and how do you know?

What would happen if the factory had to support demand that increased one percent per week? Without incremental investment, how many weeks could they meet the ever-increasing demand? That number is a measure of the system’s flexibility. More weeks, more flexibility. And the element of the manufacturing system that gives out first is the constraint. So, now you know how much demand you can support before there’s a problem and you know what the problem will be. And if you know the lead time to implement the improvement needed to support the increased demand, in a reverse-scheduling way, you know when to implement the improvement so it comes online when you need it.

What would happen if the factory had to support demand that increased one percent in a week? How about two percent in a week, five percent, or ten percent? Without incremental investment, what percentage increase could they support in a single week? More percent increase, more flexibility. And the element of the manufacturing system that gives out first is the constraint. So, now you know how much increased demand you can support in a single week and you know the gating item that will block further increases. You know now where to clip the increased demand and push the extra demand into the next week. And you know the investment it would take to support a larger increase in a single week.
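Both tests can be run on paper or in a few lines of code. What follows is a minimal sketch, not a definitive model. It assumes the one-percent-per-week growth compounds, and the capacity figures, the six-week lead time, and all function names are hypothetical placeholders for illustration.

```python
def weeks_of_headroom(current_demand, capacities, weekly_growth=0.01):
    """Count the whole weeks of compounding growth the system can absorb.

    capacities maps each element of the system to the highest weekly
    demand it can support without new investment (hypothetical numbers).
    Returns (weeks, constraint).
    """
    if current_demand <= 0 or weekly_growth <= 0:
        raise ValueError("demand and growth must be positive")

    # The element with the least capacity gives out first: the constraint.
    constraint = min(capacities, key=capacities.get)
    limit = capacities[constraint]

    weeks = 0
    demand = current_demand
    while demand * (1 + weekly_growth) <= limit:
        demand *= 1 + weekly_growth
        weeks += 1
    return weeks, constraint


def max_single_week_increase(current_demand, capacities):
    """Largest fractional jump in demand the system can absorb in one week."""
    limit = min(capacities.values())
    return limit / current_demand - 1


def latest_start_week(weeks_available, lead_time_weeks):
    """Reverse-schedule the improvement; a negative result means it is already late."""
    return weeks_available - lead_time_weeks


# Hypothetical factory: demand is 1000 units per week today.
capacities = {"assembly": 1200, "paint": 1100, "test": 1300}
weeks, constraint = weeks_of_headroom(1000, capacities)
print(weeks, constraint)
print(round(max_single_week_increase(1000, capacities), 3))
print(latest_start_week(weeks, lead_time_weeks=6))
```

With these made-up numbers, the paint line is the constraint, and the improvement to it has to start several weeks before demand reaches the limit.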

These two scenarios can be used to assess and quantify a process of any type. For example, to understand the flexibility of the new product development process, load it (virtually) with more projects to see where it breaks. Make a note of what it would take to increase the system’s flexibility and ask yourself if that’s a good investment. If it is, make that investment. If it isn’t, don’t.

This simple testing method is especially useful when the investment needed to increase flexibility has a long lead time or is expensive. If your testing says the system can support five percent more demand before it breaks and you know that demand will hit the system in ten weeks, I hope the lead time to implement the needed improvement is less than ten weeks. If not, you won’t be able to meet the increased demand. And I hope the money to make the improvement is already budgeted because a budgeting cycle is certainly longer than ten weeks and you can’t buy what you need if the money isn’t in the budget.

The first question to ask yourself: What is the minimum flexibility of the system that will trigger the next investment to improve throughput and increase flexibility? And the follow-on questions: What is needed to improve throughput? What is the lead time for that solution? How much will it cost? Is the money budgeted? And do we have the resources (people) that can implement the improvement when it’s time?

When the cost of not meeting demand is high, the value of this testing process is high. When the lead times for the improvements are long, this testing process has a lot of value because it gives you time to put the improvements in place.

Continuous improvement of process utilization is also a continuous reduction of process flexibility. This simple testing approach can help identify when process flexibility is becoming dangerously low and give you the much-needed time to put improvements in place before it’s too late.

Image credit — Tambako The Jaguar

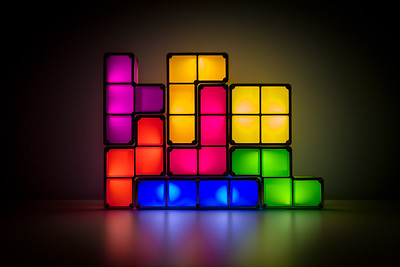

Playing Tetris With Your Project Portfolio

When planning the projects for next year, how do you decide which projects are a go and which are a no? One straightforward way is to say yes to projects when there are resources lined up to get them done and no to all others. Sure, the projects must have a good return on investment but we’re pretty good at that part. But we’re not good at saying no to projects based on real resource constraints – our people and our budgets.

It’s likely your big projects are well-defined and well-staffed. The usual problem with these projects is that the timeline is disrespectful of the work content and overly optimistic. If the project timeline is shorter than that of a previously completed project of a similar flavor, with a similar level of novelty and similar resource loading, the timeline is overly optimistic and the project will be late.

Project delays in the big projects block shared resources from moving onto other projects within the appropriate time window which cascades delays into those other projects. And the project resources themselves must stay on the big projects longer than planned (we knew this would happen even before the project started) which blocks the next project from starting on time and generates a second set of delays that rumble through the project portfolio. But the big projects aren’t the worst delay-generating culprits.

The corporate initiatives and infrastructure projects are usually well-staffed with centralized resources, but they also require significant work from the business units, and that work is an incremental demand on them. And the only place the business units can get the resources is to pull them off the (too many) big projects they’ve already committed to. And remember, the timelines for those projects are overly optimistic. The big projects that were already late before the corporate initiatives and infrastructure projects were slathered on top of them are now later.

Then there are small projects that don’t look like they’ll take long to complete, but they do. And though the project plan does not call for support resources (hey, this is a small project you know), support resources are needed. These small projects drain resources from the big projects and the support resources they need. Delay on delay on delay.

Coming out of the planning process, all teams are over-booked with too many projects, too few resources, and timelines that are too short. And then the real fun begins.

Over the course of the year, new projects arise and are started even though there are already too few resources to deliver on the existing projects. Here’s a rule no one follows: If the teams are fully-loaded, new projects cannot start before old ones finish.

It makes less than no sense to start projects when resources are already triple-double booked on existing projects. This behavior has all the downside of starting a project (consumption of resources) with none of the upside (progress). And there’s another significant downside that most don’t see. The inappropriate “starting” of the new project allows the company to tell itself that progress is being made when it isn’t. All that happens is existing projects are further starved for resources and the slow pace of progress is slowed further.

It’s bad form to play Tetris with your project portfolio.

Running too many projects in parallel is not faster. In fact, it’s far slower than matching the projects to the resources on-hand to do them. It’s essential to keep in mind that there is no partial credit for starting a project. There is 100% credit for finishing a project and 0% credit for starting and running a project.

With projects, there are two simple rules. 1) Limit the number of projects by the available resources. 2) Finish a project before starting one.

Image credit – gerlos

The Best Way To Make Projects Go Faster

When there are too many projects, all the projects move too slowly.

When there are too many projects, adding resources doesn’t help much and may make things worse.

To speed up the important projects, stop the less important projects. There’s no better way.

When there are too many projects, stopping comes before starting.

All projects are important, it’s just that some are more important than others. Stop the lesser ones.

When someone says all projects are equally important, they don’t understand projects.

If all projects are equally important, then they are also equally unimportant and it does not matter which projects are stopped. This twist of thinking can help people choose the right projects to stop.

When there are too many projects, stop two before starting another.

Finishing a project is the best way to stop a project, but that takes too long. Stop projects in their tracks.

There is no partial credit for a project that is 80% complete and blocking other projects. It’s okay to stop the project so others can finish.

Queueing theory says wait times increase dramatically when utilization of shared resources reaches 85%. The math says projects should be stopped well before shared resources are fully booked.
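To see why the curve bends so sharply, here is a rough sketch using the simplest textbook queue (M/M/1: one shared resource, random arrivals, random service times). That model is an assumption chosen for illustration and real project work is messier. In this model, the average wait in the queue, measured in multiples of the average job length, is utilization / (1 - utilization).

```python
def relative_wait(utilization):
    """Average queue wait as a multiple of the average job length (M/M/1 assumption)."""
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be at least 0 and below 1")
    return utilization / (1 - utilization)


for u in (0.50, 0.70, 0.85, 0.95):
    print(f"{u:.0%} utilized -> wait is {relative_wait(u):.1f}x the job length")
```

Under this assumption, a shared resource that is half-booked makes a job wait about as long as the job itself takes, and the wait grows several-fold by the time the resource is 85% booked.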

If you want to go faster, stop the lesser projects.

Image credit – Rodrigo Olivera

You are defined by the problems you solve.

You can solve problems that reduce the material costs of your products.

You can solve problems that reduce the number of people that work at your company.

You can solve problems that save your company money.

You can solve problems that help your customers make progress.

You can solve problems that make it easier for your customers to buy from you.

You can solve too many small problems and too few big problems.

You can solve problems that ripple profits through your whole organization.

You can solve local problems.

You can solve problems that obsolete your best products.

You can solve problems that extend and defend your existing products.

You can solve problems that spawn new businesses.

You can solve the wrong problems.

You can solve problems before their time or after it is too late.

You can solve problems that change your company or block it from change.

You are defined by the problems you solve. So, which type of problems do you solve and how do you feel about that?

Image credit – Maureen Barlin

The Curse of Too Many Active Projects

If you want your new product development projects to go faster, reduce the number of active projects. Full stop.

A rule to live by: If the new product development project is 90% complete, the company gets 0% of the value. When it comes to new product development projects, there’s no partial credit.

Improving the capabilities of your project managers can help you go faster, but not if you have too many active projects.

If you want to improve the speed of decision-making around the projects, reduce the number of required decisions by reducing the number of active projects.

Resource conflicts increase radically as the number of active projects increases. To fix this, you guessed it, reduce the number of active projects.

A project that is run under the radar is the worst type of active project. It sucks resources from the official projects and prevents truth telling because no one can admit the dark project exists.

With fewer active projects, resource intensity increases, the work is done faster, and the projects launch sooner.

Shared resources serve the projects better and faster when there are fewer active projects.

If you want to go faster, there’s no question about what you should do. You should stop the lesser projects to accelerate the most important ones. Full stop.

And if you want to stop some projects, I suggest you try to answer this question: Why does your company think it’s a good idea to have far too many active new product development projects?

Image credit — JOHN K THORNE

The Difficulty of Starting New Projects

Companies that are good at planning their projects create roadmaps spanning about three years, where individual projects are sequenced to create a coordinated set of projects that fit with each other. The roadmap helps everyone know what’s important and helps the resources flow to those most important projects.

Through the planning process, the collection of potential projects is assessed and the best ones are elevated to the product roadmap. And by best, I mean the projects that will generate the most incremental profit. The projects on the roadmap generate the profits that underpin the company’s financial plan and the company is fanatically committed to the financial plan. The importance of these projects cannot be overstated. And what that means is once a project makes it to the roadmap, there’s only one way to get it off the roadmap, and that’s to complete it successfully.

For the next three years, everyone knows what they’ll work on. And they also know what they won’t work on.

The best companies want to be efficient so they staff their projects in a way that results in high utilization. The most common way to do this is to load up the roadmap with too many projects and staff the projects with too few people. The result is a significant fraction of people’s time (sometimes more than 100%) is pre-allocated to the projects on the roadmap. The efficiency metrics look good and it may actually result in many successful launches. But the downside of ultra-high utilization of resources is often forgotten.

When all your people are booked for the next three years on high-value projects, they cannot respond to new opportunities as they arise. When someone comes back from a customer visit and says, “There’s an exciting new opportunity to grow the business significantly!” the best response is “We can’t do that because all our people are committed to the three-year plan.” The worst response is “Let’s put together a team to create a project plan and do the project.” With the first response, the project doesn’t get done and zero resources are wasted trying to figure out how to do the project without the needed resources. With the second response, the project doesn’t get done but only after significant resources are wasted trying to figure out how to do the project without the needed resources.

Starting new projects is difficult because everyone is over-booked and over-committed on projects that the company thinks will generate significant (and predictable) profits. What this means is to start a new project in this high-utilization environment, the new project must displace a project on the three-year plan. And remember, the projects that must be displaced are the projects the company has chosen to generate the company’s future profits. So, to become an active project (and make it to the three-year plan) the candidate project must be shown to create more profits, use fewer resources and launch sooner than the projects already on the three-year plan. And this is taller than a tall order.

So, is there a solution? Not really, because the only possible solution is to reduce resource utilization to create unallocated resources that can respond to emergent opportunities when they arise. And that’s not possible because good companies have a deep and unskillful attachment to efficiency.

Image credit — Bernard Spragg NZ

The Mighty Capacity Model

There are natural limits to the amount of work that any one person or group can do. And once that limit is reached, saying yes to more work does not increase the amount of work that gets done. Sure, you kick the can down the road when you say yes to work that you know you can’t get done, but that’s not helpful. Expectations are set inappropriately which secures future disappointment and more importantly binds or blocks other resources. When preparatory work is done for something that was never going to happen, that prep work is pure waste. And when resources are allocated to a future project that was never going to happen, the results are misalignment, mistiming, and replanning, and opportunity cost carries the day.

But how do you know if the team has what it takes to get the work done? The answer is a capacity model. There are many types of capacity models, but they all require a list of the available resources (people, tools, machines), the list of work to be done (projects), and the amount of time (in hours, weeks, months) each project requires for each resource. The best place to start is to create a simple spreadsheet where the leftmost column lists the names of the people and the resources (e.g., labs, machines, computers, tools). Across the top row of the spreadsheet enter the names of the projects. For the first project, go down the list of people and resources, and for each person/resource required for the project, type an X in the column. Repeat the process for the remainder of the projects.

While this spreadsheet is not a formal capacity model, as it does not capture the number of hours each project requires from the resources, it’s plenty good enough to help you understand if you have a problem. If a person has only one X in their row, only one project requires their time and they can work full-time on that project for the whole year. If another person has sixteen Xs in their row, that’s a big problem. If a machine has no Xs in its row, no projects require that machine, and its capacity can be allocated to other projects across the company. And if a machine has twenty Xs in its row, that’s a big problem.

This simple spreadsheet gives a one-page, visual description of the team’s capacity. Held at arm’s length, the patterns made by the Xs tell the whole story.

To take this spreadsheet to the next level, the Xs can be replaced with numbers that represent the number of weeks each project requires from the people and resources. Sit down with each person and for each X in their row, ask them how many weeks each project will consume. For example, if they are supposed to support three projects, X1 is replaced with 15 (weeks), X2 is replaced with 5, and X3 is replaced with 5 for a total of 25 weeks (15 + 5 + 5). This means the person’s capacity is about 50% consumed (25 weeks / 50 weeks per year) by the three projects. For each resource, ask the resource owner how much time each project requires from the resource. For a machine that is needed for ten projects where each project requires twenty weeks, the machine does not have enough capacity to support the projects. The calculation says the project load requires 200 machine-weeks (10*20 = 200 weeks) and four machines (200 machine-weeks / 50 weeks per year = 4 machines) are required.
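The arithmetic above fits in a few lines of code if the spreadsheet outgrows a single page. This is a minimal sketch that reuses the numbers from the example (15 + 5 + 5 weeks for a person, ten projects at twenty weeks each for a machine, 50 working weeks per year). The names in it (`WEEKS_PER_YEAR`, `person`, `machine`, the project labels) are hypothetical.

```python
import math

WEEKS_PER_YEAR = 50  # working weeks per year, as in the example above


def project_count(row):
    """Number of projects that need this person or resource (the Xs in the row)."""
    return len(row)


def utilization(row):
    """Fraction of one year consumed by the projects in this row."""
    return sum(row.values()) / WEEKS_PER_YEAR


def units_required(row):
    """Whole people or machines needed to carry the row's load for a year."""
    return math.ceil(sum(row.values()) / WEEKS_PER_YEAR)


# One row per person or resource: project name -> weeks required.
person = {"Project 1": 15, "Project 2": 5, "Project 3": 5}
machine = {f"Project {n}": 20 for n in range(1, 11)}

print(project_count(person), utilization(person))      # three Xs, about half booked
print(project_count(machine), units_required(machine))  # ten Xs, several machines needed
```

A utilization above 1.0, or a `units_required` above the number of units you own, flags the rows that need a conversation.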

Creating a spreadsheet that lists all the projects is helpful in its own right. And you’ll probably learn that there are far more projects than anyone realizes. (Helpful hint: make sure you ask three times if all the projects are listed on the spreadsheet.) And asking people how much time is required for each project is respectful of their knowledge and skillful because they know best how long the work will take. They’ll feel good about all that. And quantifying the number of weeks (or hours) each project requires elevates the discussion from argument to analysis.

With this simple capacity model, the team can communicate clearly which projects can be supported and which cannot. And, where there’s a shortfall, the team can make a list of the additional resources that would be needed to support the full project load.

Fight the natural urge to overcomplicate the first version of the capacity model. Start with a simple project-people/resource spreadsheet and use the Xs. And use the conversations to figure out how to improve it for next time.

Which new product development project should we do first?

X: Of the pool of candidate new product development projects, which project should we do first?

Me: Let’s do the one that makes us the most money.

X: Which project will make the most money?

Me: The one where the most customers buy the new product, pay a reasonable price, and feel good doing it.

X: And which one is that?

Me: The one that solves the most significant problem.

X: Oh, I know our company’s most significant problem. Let’s solve that one.

Me: No. Customers don’t care about our problems, they only care about their problems.

X: So, you’re saying we should solve the customers’ problem?

Me: Yes.

X: Are you sure?

Me: Yes.

X: We haven’t done that in the past. Why should we do it now?

Me: Have your previous projects generated revenue that met your expectations?

X: No, they’ve delivered less than we hoped.

Me: Well, that’s because there’s no place for hope in this game.

X: What do you mean?

Me: You can’t hope they’ll buy it. You need to know the customers’ problems and solve them.

X: Are you always like this?

Me: Only when it comes to customers and their problems.

Image credit – Kyle Pearce

Three Important Choices for New Product Development Projects

Choose the right project. When you say yes to a new project, all the focus is on the incremental revenue the project will generate and none of the focus is on unrealized incremental revenue from the projects you said no to. Next time there’s a proposal to start a new project, ask the team to describe the two or three most compelling projects that they are asking the company to say no to. Grounding the go/no-go decision within the context of the most compelling projects will help you avoid the real backbreaker where you consume all your product development resources on something that scratches the wrong itch while you prevent those resources from creating something magical.

Choose what to improve. Give your customers more of what you gave them last time unless what you gave them last time is good enough. Once goodness is good enough, giving customers more is bad business because your costs increase but their willingness to pay does not. Once your offering meets the customers’ needs in one area, lock it down and improve a different area.

Choose how to staff the projects. There is a strong temptation to run many projects in parallel. It’s almost like our objective is to maximize the number of active projects at the expense of completing them. Here’s the thing about projects – there is no partial credit for partially completed projects. Eight active projects that are eight (or eighty) percent complete generate zero revenue and have zero commercial value. For your most important project, staff it fully. Add resources until adding more resources would slow the project. Then, for your next most important project, repeat the process with your remaining resources. And once a project is completed, add those resources to the pool and start another project. This approach is especially powerful because it prioritizes finishing projects over starting them.
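The staffing rule can be sketched as a simple loop. This is one possible reading, not a definitive method. It assumes each project has a known "full" staffing level (the point where adding more people would slow it down) and that a project waits rather than starts understaffed. The project names and headcounts are hypothetical.

```python
def staff_by_priority(projects, available):
    """Fully staff projects in priority order until the pool runs short.

    projects: list of (name, full_staffing) pairs, most important first.
    available: number of people in the pool.
    Returns (active, waiting, idle): staffed projects, projects that must
    wait, and people left unallocated.
    """
    active = {}
    waiting = []
    for index, (name, full_staffing) in enumerate(projects):
        if full_staffing > available:
            # Assumption: never start a project that cannot be fully staffed.
            waiting = list(projects[index:])
            break
        active[name] = full_staffing
        available -= full_staffing
    return active, waiting, available


# Hypothetical portfolio, most important project first.
portfolio = [("A", 5), ("B", 4), ("C", 3), ("D", 2)]
active, waiting, idle = staff_by_priority(portfolio, available=10)
print(active, waiting, idle)

# When project A finishes, its people return to the pool and the next projects start.
pool = idle + active.pop("A")
started, still_waiting, idle = staff_by_priority(waiting, available=pool)
print(started, still_waiting, idle)
```

The design choice worth noticing is that nothing new starts until a finished project frees up people, so finishing always comes before starting.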

“Three Cows” by Sunfox is licensed under CC BY-SA 2.0.

Want to succeed? Learn how to deliver customer value.

Whatever your initiative, start with customer value. Whatever your project, base it on customer value. And whatever your new technology, you guessed it, customer value should be front and center.

Whenever the discussion turns to customer value, expect confusion, disagreement, and, likely, anger. To help things move forward, here’s an operational definition I’ve found helpful:

When they buy it for more than your cost to make it, you have customer value.

And when there’s no way to pull out of the death spiral of disagreement, use this operational definition to avoid (or stop) bad projects:

When no one will buy it, you don’t have customer value and it’s a bad project.

As two words, customer and value don’t seem all that special. But, when you put them together, they become words to live by. But, also, when you do put them together, things get complicated. Here’s why.

To provide customer value, you’ve got to know (and name) the customer. When you ask “Who is the customer?” the wheels fall off. Here are some wrong answers to that tricky question. The Board of Directors is the customer. The shareholders are the customers. The distributor is the customer. The OEM that integrates your product is the customer. And the people that use the product are the customers. Here’s an operational definition that will set you free:

When someone buys it, they are the customer.

When the discussions get sticky, hold onto that definition. Others will try to bait you into thinking differently, but don’t bite. It will be difficult to stand your ground. And if you feel the group is headed in the wrong direction, try to set things right with this operational definition:

When you’ve found the person who opens their wallet, you’ve found the customer.

Now, let’s talk about value. Isn’t value subjective? Yes, it is. And the only opinion that matters is the customer’s. And here’s an operational definition to help you create customer value:

When you solve an important customer problem, they find it valuable.

And there you have it. Putting it all together, here’s the recipe for customer value:

- Understand who will buy it.

- Understand their work and identify their biggest problem.

- Solve their problem and embed it in your offering.

- Sell it for more than it costs you to make it.

Image credit — Caroline

The Most Important People in Your Company

When the fate of your company rests on a single project, who are the three people you’d tap to drag that pivotal project over the finish line? And to sharpen it further, ask yourself “Who do I want to lead the project that will save the company?” You now have a list of the three most important people in your company. Or, if you answered the second question, you now have the name of the most important person in your company.

The most important person in your company is the person that drags the most important projects over the finish line. Full stop.

When the project is on the line, the CEO doesn’t matter; the General Manager doesn’t matter; the Business Leader doesn’t matter. The person that matters most is the Project Manager. And the second and third most important people are the two people that the Project Manager relies on.

Don’t believe that? Well, take a bite of this. If the project fails, the product doesn’t sell. And if the product doesn’t sell, the revenue doesn’t come. And if the revenue doesn’t come, it’s game over. Regardless of how hard the CEO pulls, the product doesn’t launch, the revenue doesn’t come, and the company dies. Regardless of how angry the GM gets, without a product launch, there’s no revenue, and it’s lights out. And regardless of the Business Leader’s cajoling, the project doesn’t cross the finish line unless the Project Manager makes it happen.

The CEO can’t launch the product. The GM can’t launch the product. The Business Leader can’t launch the product. Stop for a minute and let that sink in. Now, go back to those three sentences and read them out loud. No, really, read them out loud. I’ll wait.

When the wheels fall off a project, the CEO can’t put them back on. Only a special Project Manager can do that.

There are tools for project management, there are degrees in project management, and there are certifications for project management. But all that is meaningless because project management is alchemy.

Degrees don’t matter. What matters is that you’ve taken over a poorly run project, turned it on its head, and dragged it across the line. What matters is you’ve run a project that was poorly defined, poorly staffed, and poorly funded and brought it home kicking and screaming. What matters is you’ve landed a project successfully when two of three engines were on fire. (Belly landings count.) What matters is that you vehemently dismiss the continuous improvement community on the grounds there can be no best practice for a project that creates something that’s new to the world. What matters is that you can feel the critical path in your chest. What matters is that you’ve sprinted toward the scariest projects and people followed you. And what matters most is they’ll follow you again.

Project Managers have won the hearts and minds of the project team.

The Project Manager knows what the team needs and provides it before the team needs it. And when an unplanned need arises, like it always does, the Project Manager begs, borrows, and steals to secure what the team needs. And when they can’t get what’s needed, they apologize to the team, re-plan the project, reset the completion date, and deliver the bad news to those that don’t want to hear it.

If the General Manager says the project will be done in three months and the Project Manager thinks otherwise, put your money on the Project Manager.

Project Managers aren’t at the top of the org chart, but we punch above our weight. We’ve earned the trust and respect of most everyone. We aren’t liked by everyone, but we’re trusted by all. And we’re not always understood, but everyone knows our intentions are good. And when we ask for help, people drop what they’re doing and pitch in. In fact, they line up to help. They line up because we’ve gone out of our way to help them over the last decade. And they line up to help because we’ve put it on the table.

Whether it’s IoT, Digital Strategy, Industry 4.0, top-line growth, recurring revenue, new business models, or happier customers, it’s all about the projects. None of this is possible without projects. And the keystone of successful projects? You guessed it. Project Managers.

Image credit – Bernard Spragg .NZ

Mike Shipulski